Abstract

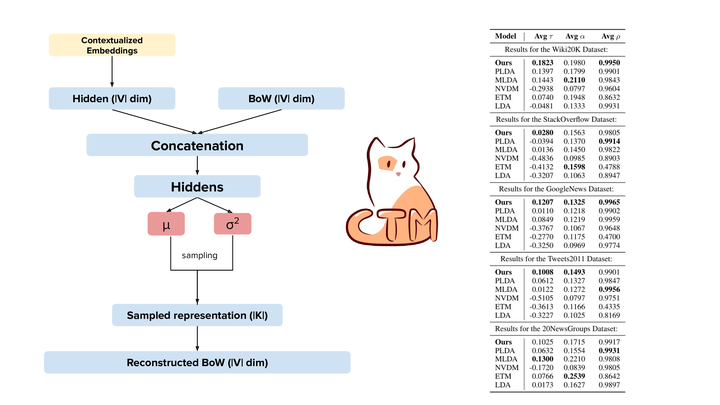

Topic models extract groups of words from documents, and interpreting each group as a topic can help us understand the data better. However, the resulting word groups are often incoherent, which makes them hard to interpret. Recently, neural topic models have improved overall coherence. At the same time, contextual embeddings have advanced the state of the art of neural models in general. In this paper, we combine contextualized BERT representations with neural topic models. We find that our approach produces more meaningful and coherent topics than traditional bag-of-words topic models and recent neural models. Our results indicate that future improvements in language models will translate into better topic models.
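To make "combining contextualized representations with a neural topic model" concrete, here is a toy sketch. It assumes one simple way of doing it: concatenating a document's bag-of-words counts with a contextual document embedding and passing the result through an encoder that outputs topic proportions. It is not presented as the paper's exact architecture. The embedding values are hypothetical placeholders for something like a pooled BERT output. The weights are random, and the variational sampling and the decoder that reconstructs the bag of words are omitted.

```python
import math
import random


def bag_of_words(doc, vocab):
    """Count how often each vocabulary word occurs in a whitespace-tokenized document."""
    index = {w: i for i, w in enumerate(vocab)}
    counts = [0.0] * len(vocab)
    for token in doc.lower().split():
        if token in index:
            counts[index[token]] += 1.0
    return counts


def softmax(xs):
    """Numerically stable softmax: shift by the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def topic_proportions(bow, embedding, weights, bias):
    """Toy encoder: one linear layer over [bow ; embedding], then softmax.

    Returns a distribution over topics (positive values that sum to 1).
    """
    x = bow + embedding  # list concatenation: BoW features, then contextual features
    logits = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]
    return softmax(logits)


vocab = ["goal", "match", "vote", "election"]
doc = "The match ended with a late goal"
# Hypothetical stand-in for a contextualized document embedding (e.g. pooled BERT output).
embedding = [0.12, -0.40, 0.33]

num_topics = 2
input_dim = len(vocab) + len(embedding)
rng = random.Random(0)  # fixed seed so the random toy weights are reproducible
weights = [[rng.gauss(0.0, 0.1) for _ in range(input_dim)] for _ in range(num_topics)]
bias = [0.0] * num_topics

theta = topic_proportions(bag_of_words(doc, vocab), embedding, weights, bias)
print(theta)
```

In a trained model, the weights would be learned so that these proportions, together with per-topic word distributions, reconstruct the document's bag of words. The contextual embedding gives the encoder information about word order and meaning that the counts alone lack.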

Type

Publication

In Proceedings of the Conference of the Association for Computational Linguistics